Promptfoo

Promptfoo is an open-source tool for testing and evaluating LLM outputs. Compare models, run red-team assessments, and catch regressions with a built-in web UI.

Services

promptfoo

ghcr.io/promptfoo/promptfoo:0.121.3

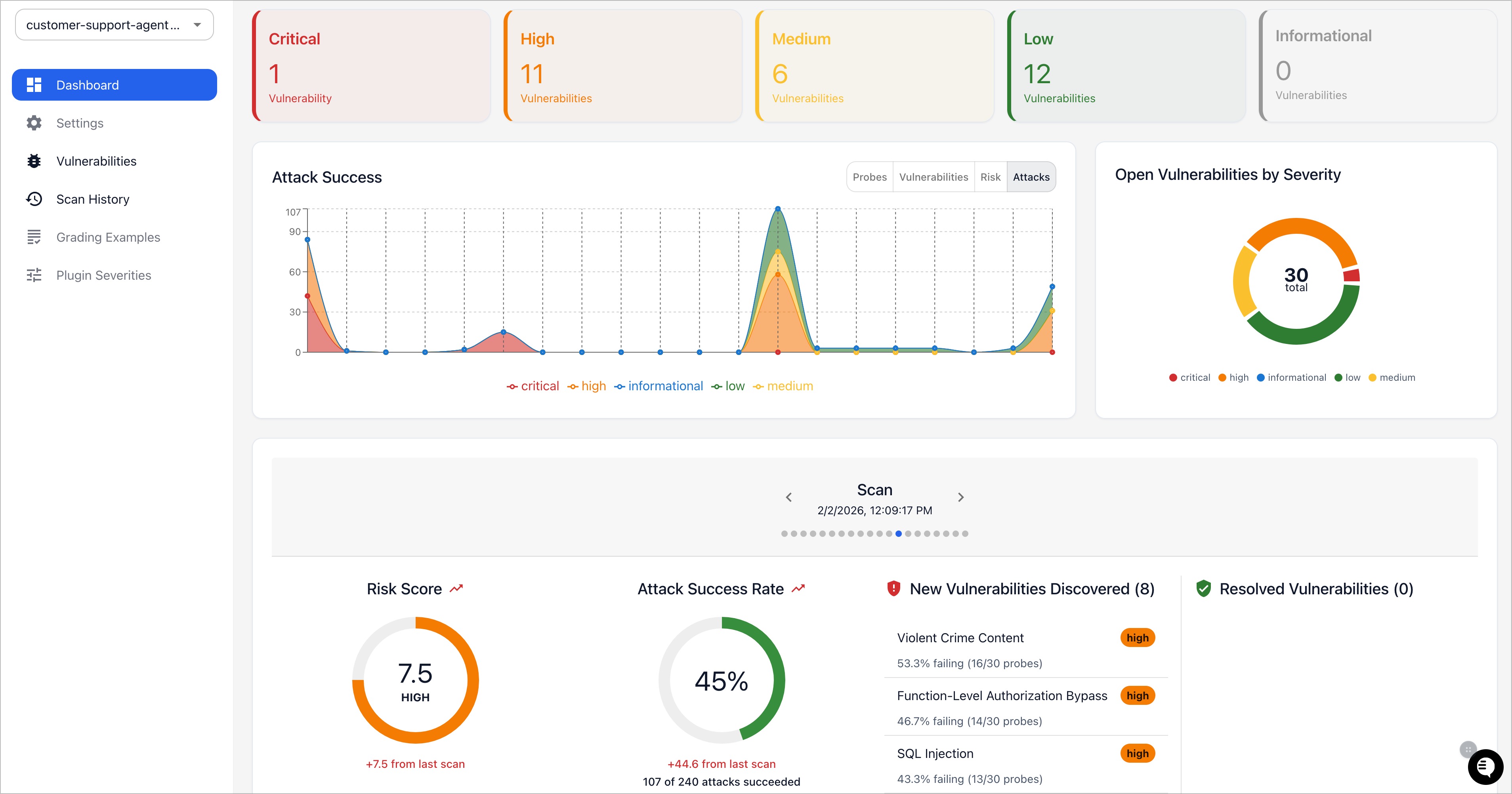

Open-source LLM testing and evaluation framework. Compare prompt quality across models, detect regressions, and run red-team security assessments — all from a web dashboard.

What You Can Do After Deployment

- Open your domain — the Promptfoo web UI loads immediately

- Create evaluations — define test cases and compare outputs across GPT-4, Claude, Llama, and others

- Run red-team assessments — automatically probe models for prompt injection and jailbreak vulnerabilities

- View results — side-by-side comparison tables with pass/fail scoring

- Export reports — share evaluation results with your team
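An evaluation runs every test case against every model and scores each output with assertions. That produces the pass/fail grid shown in the results view. The sketch below models the grid in plain Python. It is not Promptfoo code. The two provider functions are hypothetical stand-ins for real model calls. The `contains` and `icontains` checks are loosely modeled on Promptfoo's assertion types of the same names. In Promptfoo itself, prompts, providers, and tests are declared in a config file such as `promptfooconfig.yaml` rather than written as code.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical stand-ins for real model calls (e.g. GPT-4, Claude, Llama).
def provider_a(prompt: str) -> str:
    return f"Paris is the capital of France. ({prompt})"

def provider_b(prompt: str) -> str:
    return "I am not sure."

@dataclass
class Assertion:
    kind: str   # "contains" (case-sensitive) or "icontains" (case-insensitive)
    value: str

    def check(self, output: str) -> bool:
        if self.kind == "contains":
            return self.value in output
        if self.kind == "icontains":
            return self.value.lower() in output.lower()
        raise ValueError(f"unsupported assertion type: {self.kind}")

@dataclass
class EvalCase:
    prompt: str
    asserts: List[Assertion]

def run_eval(providers: Dict[str, Callable[[str], str]],
             cases: List[EvalCase]) -> Dict[str, List[bool]]:
    """Return a grid mapping provider name -> pass/fail result per test case."""
    grid: Dict[str, List[bool]] = {}
    for name, call in providers.items():
        row = []
        for case in cases:
            output = call(case.prompt)
            # A case passes only if every one of its assertions holds.
            row.append(all(a.check(output) for a in case.asserts))
        grid[name] = row
    return grid

cases = [
    EvalCase("What is the capital of France?", [Assertion("icontains", "paris")]),
    EvalCase("Name the capital of France in one word.", [Assertion("contains", "Paris")]),
]
grid = run_eval({"provider-a": provider_a, "provider-b": provider_b}, cases)
for name, row in grid.items():
    print(name, ["PASS" if ok else "FAIL" for ok in row])
```

Each row corresponds to one column in a side-by-side comparison table. Requiring every assertion to hold mirrors the usual "all checks must pass" scoring rule.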

Use Cases

- Prompt engineering and A/B testing

- Model selection and benchmarking

- LLM security and red-teaming

- CI/CD integration for prompt regression testing

- Cost and latency comparison across providers
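For prompt regression testing in CI/CD, a common pattern is to compare a new run against a stored baseline and fail the build when quality drops. The helper below is a hypothetical sketch and not part of Promptfoo. Its result shape (a test ID mapped to pass/fail) and its 5-point tolerance are assumptions chosen for illustration.

```python
from typing import Dict, List

# Assumed result shape: test ID -> passed? (not Promptfoo's output format).
Run = Dict[str, bool]

def pass_rate(run: Run) -> float:
    """Fraction of test cases that passed (0.0 for an empty run)."""
    return sum(run.values()) / len(run) if run else 0.0

def find_regressions(baseline: Run, candidate: Run) -> List[str]:
    """Test IDs that passed in the baseline but fail (or are missing) in the candidate."""
    return sorted(t for t, ok in baseline.items() if ok and not candidate.get(t, False))

def ci_exit_code(baseline: Run, candidate: Run, tolerance: float = 0.05) -> int:
    """Return 1 (fail the build) on any per-test regression or a pass-rate drop above tolerance."""
    regressions = find_regressions(baseline, candidate)
    drop = pass_rate(baseline) - pass_rate(candidate)
    for test_id in regressions:
        print(f"REGRESSION: {test_id}")
    print(f"pass rate {pass_rate(baseline):.0%} -> {pass_rate(candidate):.0%}")
    return 1 if regressions or drop > tolerance else 0

baseline = {"greeting": True, "refund-policy": True, "json-format": True, "tone": False}
candidate = {"greeting": True, "refund-policy": False, "json-format": True, "tone": True}
code = ci_exit_code(baseline, candidate)
```

Both runs score 75%, but the check still returns 1 because `refund-policy` regressed. Checking per-test transitions catches swaps like this, which an aggregate pass rate alone would hide. In a real CI job, you would end with `sys.exit(code)` so that a non-zero value fails the step.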

License

MIT (source code on GitHub)